Interrater Agreement

Researchers often attempt to evaluate the consensus or agreement of judgments or decisions provided by a group of raters (or judges, observers, experts, diagnostic tools). The judgments can be nominal (e.g., Republicans/Democrats/Independents, or Yes/No), ordinal (e.g., low, medium, high), interval, or ratio (e.g., Target A is twice as heavy as Target B). Whatever the type of judgment, the common goal of interrater agreement indexes is to assess the degree to which raters agree about the precise values of one or more attributes of a target, that is, how interchangeable their ratings are. Interrater agreement has been consistently confused with INTERRATER RELIABILITY in both practice and research (Kozlowski & Hattrup, 1992; Tinsley & Weiss, 1975). The two terms represent different concepts and require different measurement indexes. For instance, suppose three reviewers, A, B, and C, rate a manuscript on four dimensions (clarity of writing, comprehensiveness of literature review, methodological adequacy, and contribution to the field) using six response categories ranging from 1 (unacceptable) to 6 (outstanding). The ratings of reviewers A, B, and C on the four dimensions are (1, 2, 3, 4), (2, 3, 4, 5), and (3, 4, 5, 6), respectively. The data clearly show that the reviewers disagree with one another on every dimension (i.e., zero interrater agreement), although their ratings are perfectly consistent across the dimensions (i.e., perfect interrater reliability).

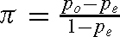

There are many indexes of interrater agreement, developed for different circumstances, such as different types of judgments (nominal, ordinal, etc.) and varying numbers of raters or of rated attributes of the target. Examples include percentage agreement; the STANDARD DEVIATION of the ratings; the STANDARD ERROR of the mean; Scott's (1955) π; Cohen's (1960) kappa (κ) and Cohen's (1968) weighted kappa ($\kappa_w$); Lawlis and Lu's (1972) χ² test; Lawshe's (1975) Content Validity Ratio (CVR) and Content Validity Index (CVI); Tinsley and Weiss's (1975) T index; James, Demaree, and Wolf's (1984) $r_{WG(J)}$; and Lindell, Brandt, and Whitney's (1999) $r^*_{WG(J)}$. The remainder of this entry reviews Scott's π, Cohen's κ and weighted $\kappa_w$, Tinsley and Weiss's T index, Lawshe's CVR and CVI, James et al.'s $r_{WG(J)}$, and Lindell et al.'s $r^*_{WG(J)}$. These indexes have been widely used, and their corresponding NULL HYPOTHESIS tests are well developed.

Scott's π applies to categorical decisions made by two independent raters about an attribute of a group of ratees. It is defined as

$$\pi = \frac{p_o - p_e}{1 - p_e}$$

and in practice ranges from 0 to 1 (negative values are obtained when observed agreement falls below chance agreement), where $p_o$ and $p_e$ are the proportion of observed agreement between the two raters and the proportion of agreement expected by chance, respectively. Determined by the number of judgmental categories and the frequencies with which both raters use them, $p_e$ is defined as the sum of the squared overall proportions of the categories,

$$p_e = \sum_{i=1}^{k} p_i^2$$

where $k$ is the total number of categories and $p_i$ is the proportion of all judgments (pooled across both raters) that fall in the $i$th category.
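To make the definitions of $p_o$ and $p_e$ concrete, here is a short Python sketch of Scott's π for two raters who code the same ratees into nominal categories. The function name `scotts_pi` and the Yes/No data are hypothetical, chosen only for illustration.

```python
from collections import Counter

def scotts_pi(rater1, rater2):
    """Scott's pi for two raters who assign the same targets to nominal categories.

    p_o: proportion of targets on which the two raters chose the same category.
    p_e: sum over categories of the squared proportion of all 2n judgments
         (both raters pooled) that fall in that category.
    """
    if len(rater1) != len(rater2) or not rater1:
        raise ValueError("both raters must judge the same non-empty set of targets")
    n = len(rater1)
    p_o = sum(a == b for a, b in zip(rater1, rater2)) / n
    pooled = Counter(rater1) + Counter(rater2)  # category -> count over 2n judgments
    p_e = sum((count / (2 * n)) ** 2 for count in pooled.values())
    if p_e == 1:
        raise ValueError("pi is undefined when every judgment is in one category")
    return (p_o - p_e) / (1 - p_e)

# Hypothetical Yes/No decisions by two raters on the same 10 ratees.
r1 = ["Y", "Y", "Y", "N", "N", "Y", "N", "Y", "Y", "N"]
r2 = ["Y", "N", "Y", "N", "N", "Y", "N", "Y", "Y", "Y"]
print(round(scotts_pi(r1, r2), 3))  # 0.583
```

With these made-up codes the raters agree on 8 of 10 ratees ($p_o = .80$); each says Yes 6 times, so the pooled proportions are .60 and .40, $p_e = .60^2 + .40^2 = .52$, and π = .28/.48 ≈ .583.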

Cohen's κ, the most widely used index, assesses agreement between two or more independent raters who make categorical decisions regarding an attribute. Like Scott's π, Cohen's κ is defined as

$$\kappa = \frac{p_o - p_e}{1 - p_e}$$

and usually ranges from 0 to 1. However, Cohen's κ operationalizes $p_e$ differently: as the sum, across all categories, of the joint probabilities of the MARGINALS in the CONTINGENCY TABLE (for two raters, the product of each rater's marginal proportion for a category, summed over categories). Unlike Cohen's κ, which treats every disagreement as equally serious, Cohen's $\kappa_w$ takes the degree of disagreement into consideration by weighting disagreements according to their seriousness. In some practical situations, such as personnel selection, a disagreement between “definitely succeed” and “likely succeed” would be less critical than one between “definitely succeed” and “definitely fail.” Both κ and $\kappa_w$ tend to overcorrect for chance agreement as the number of raters increases.
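The difference in chance correction, and the role of the weights in $\kappa_w$, can be illustrated with a sketch that works from a $k \times k$ contingency table. It uses the disagreement-weight form $\kappa_w = 1 - \sum_{ij} v_{ij} p_{o,ij} / \sum_{ij} v_{ij} p_{e,ij}$, where $p_{e,ij}$ is the product of the two raters' marginal proportions; with weight 1 for every disagreement it reduces to unweighted κ. The function name `cohens_kappa`, the linear weights $|i - j|$, and the counts are illustrative assumptions, not values from this entry; Cohen's (1968) $\kappa_w$ lets the researcher choose the weights.

```python
def cohens_kappa(table, weights=None):
    """Cohen's kappa, or weighted kappa, from a k x k contingency table.

    table[i][j]   = number of targets that rater 1 placed in category i and
                    rater 2 placed in category j (same category order for both).
    weights[i][j] = disagreement weight v_ij, 0 on the diagonal. If omitted,
                    every disagreement gets weight 1, which gives unweighted kappa.
    """
    k = len(table)
    n = sum(sum(row) for row in table)
    if weights is None:
        weights = [[0 if i == j else 1 for j in range(k)] for i in range(k)]
    row_p = [sum(table[i]) / n for i in range(k)]                       # rater 1 marginals
    col_p = [sum(table[i][j] for i in range(k)) / n for j in range(k)]  # rater 2 marginals
    # Weighted observed and chance-expected disagreement; expected cell
    # proportions are products of the two raters' marginals.
    observed = sum(weights[i][j] * table[i][j] / n for i in range(k) for j in range(k))
    expected = sum(weights[i][j] * row_p[i] * col_p[j] for i in range(k) for j in range(k))
    if expected == 0:
        raise ValueError("kappa is undefined: no disagreement is expected by chance")
    return 1 - observed / expected

# Hypothetical ratings of 50 job candidates; category order (rows and columns):
# 0 = "definitely succeed", 1 = "likely succeed", 2 = "definitely fail".
table = [
    [15, 4, 1],
    [3, 12, 2],
    [0, 3, 10],
]
linear = [[abs(i - j) for j in range(3)] for i in range(3)]  # assumed linear weights
print(f"kappa   = {cohens_kappa(table):.3f}")          # kappa   = 0.606
print(f"kappa_w = {cohens_kappa(table, linear):.3f}")  # kappa_w = 0.673
```

Because most disagreements in this made-up table fall between adjacent categories, the linearly weighted $\kappa_w$ (.673) is higher than the unweighted κ (.606).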

...
