Box-Jenkins Modeling

The methodology associated with the names of Box and Jenkins applies to time-series data, where observations occur at equally spaced intervals (Box & Jenkins, 1976). Unlike DETERMINISTIC MODELS, Box-Jenkins models treat a time series as the realization of a STOCHASTIC process, specifically an ARIMA (autoregressive, integrated, moving average) process. The main applications of Box-Jenkins models are forecasting future observations of a time series, determining the effect of an intervention in an ongoing time series (INTERVENTION ANALYSIS), and estimating dynamic input-output relationships (transfer function analysis).

ARIMA Models

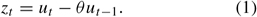

The simplest version of an ARIMA model assumes that observations of a time series ($z_t$) are generated by random shocks ($u_t$) that carry over only to the next time point, according to the moving-average parameter ($\theta$). This is a first-order moving-average model, MA(1), which has the following form (the negative sign on the parameter is a notational convention and can be ignored):

$$z_t = u_t - \theta u_{t-1}$$
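The short memory of an MA(1) process can be checked by simulation. The sketch below is illustrative only: it assumes the form $z_t = u_t - \theta u_{t-1}$ with standard normal shocks, and the names `simulate_ma1` and `autocorr`, the value $\theta = 0.6$, and the sample size are hypothetical choices, not taken from the entry. Under that assumed form the lag-1 autocorrelation is $-\theta / (1 + \theta^2)$ and all higher-lag autocorrelations are zero.

```python
import random


def simulate_ma1(theta, n, seed=0):
    """Simulate z_t = u_t - theta * u_{t-1} with standard normal shocks (assumed form)."""
    rng = random.Random(seed)
    shocks = [rng.gauss(0.0, 1.0) for _ in range(n + 1)]
    return [shocks[t] - theta * shocks[t - 1] for t in range(1, n + 1)]


def autocorr(series, lag):
    """Sample autocorrelation of a series at the given lag."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    denom = sum(d * d for d in dev)
    return sum(dev[t] * dev[t - lag] for t in range(lag, n)) / denom


theta = 0.6  # illustrative value
z = simulate_ma1(theta, n=20000)
theory_lag1 = -theta / (1 + theta ** 2)

print("lag 1: sample", round(autocorr(z, 1), 3), "theory", round(theory_lag1, 3))
print("lag 2: sample", round(autocorr(z, 2), 3), "theory 0.0")
```

With a long simulated series the lag-1 sample value should sit near the theoretical one, while the lag-2 value should be close to zero, which is the sense in which today's memory extends only to yesterday's shock.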

This process is only one short step away from pure randomness, called “white noise.” The memory today ($t$) extends only to yesterday's news ($t-1$), not to any before then. Even though a moving-average model can easily be extended, if necessary, to accommodate a larger set of prior random shocks, say $u_{t-2}$ or $u_{t-3}$, there soon comes a point where the MA framework proves too cumbersome. A more practical way of handling the accumulated, though discounted, shocks of the past is by way of the autoregressive model. That takes us to the “AR” part of ARIMA:

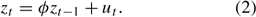

In this process, AR(1), the accumulated past ($z_{t-1}$), not just the most recent random shock ($u_{t-1}$), carries over from one period to the next, according to the autoregressive parameter ($\phi$). But not all of it does. The autoregressive process requires some leakage in the transition from time $t-1$ to time $t$. As long as the absolute value of $\phi$ stays below 1.0, the AR(1) process meets the requirements of stationarity (constant mean level, constant variance, and covariances between observations that depend only on the lag separating them).
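To make the idea of accumulated but discounted shocks concrete, the following sketch assumes the usual AR(1) form $z_t = \phi z_{t-1} + u_t$ and a series that starts from zero. It builds the series recursively and then rebuilds one observation as a weighted sum of all earlier shocks, with weight $\phi^j$ on the shock from $j$ periods back. The function names and the value $\phi = 0.7$ are hypothetical and chosen only for illustration.

```python
import random


def ar1_recursive(phi, shocks):
    """Build z_t = phi * z_{t-1} + u_t, starting from z = 0 before the first shock."""
    z, path = 0.0, []
    for u in shocks:
        z = phi * z + u
        path.append(z)
    return path


def ar1_as_discounted_shocks(phi, shocks, t):
    """The same z_t written as a sum of past shocks, each discounted by phi ** j."""
    return sum(phi ** j * shocks[t - j] for j in range(t + 1))


rng = random.Random(1)
shocks = [rng.gauss(0.0, 1.0) for _ in range(200)]
phi = 0.7  # illustrative value inside the stationary range

path = ar1_recursive(phi, shocks)
t = 150
print("recursive form:       ", path[t])
print("discounted-shock form:", ar1_as_discounted_shocks(phi, shocks, t))
print("weight on a shock 10 periods old:", phi ** 10)
```

The two forms agree up to floating-point error, and the weight on old shocks shrinks geometrically. That shrinkage is the "leakage" described above; with $|\phi| \geq 1$ the weights would not die out.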

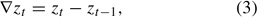

Many time series in real life, of course, are not stationary. They exhibit trends (long-term growth or decline) or wander freely, like a RANDOM WALK. The observations of such time series must first be transformed into stationary ones before autoregressive and/or moving-average components can be estimated. The standard procedure is to difference the time series $z_t$,

$$\nabla z_t = z_t - z_{t-1},$$

and to determine whether the resulting series ($\nabla z_t$) meets the requirements of stationarity. If so, the original series ($z_t$) is considered integrated of order 1. That is what the “I” in ARIMA refers to: it simply counts the number of times a time series must be differenced to achieve stationarity. One difference (I = 1) is sufficient for most nonstationary series.
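As a small illustration of differencing, the sketch below simulates a random walk (each observation is the previous one plus a new shock) and takes its first difference, $z_t - z_{t-1}$. The helper names `difference` and `lag1_autocorr`, the seed, and the series length are assumptions made for the example. The lag-1 autocorrelation is used here only as a rough indicator: a value near 1 suggests a wandering, nonstationary series, while a value near 0 is what white noise would show.

```python
import random


def difference(series):
    """First difference: z_t - z_{t-1} for every t after the first observation."""
    return [curr - prev for prev, curr in zip(series, series[1:])]


def lag1_autocorr(series):
    """Sample autocorrelation at lag 1."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    return sum(dev[t] * dev[t - 1] for t in range(1, n)) / sum(d * d for d in dev)


rng = random.Random(7)
shocks = [rng.gauss(0.0, 1.0) for _ in range(5000)]

# Random walk: the running sum of the shocks.
walk, level = [], 0.0
for u in shocks:
    level += u
    walk.append(level)

dz = difference(walk)

print("lag-1 autocorrelation, original series:   ", round(lag1_autocorr(walk), 3))
print("lag-1 autocorrelation, differenced series:", round(lag1_autocorr(dz), 3))
```

Differencing a random walk once recovers the underlying shocks, so in this constructed case a single difference is enough. Real series may call for a formal stationarity check rather than an eyeball comparison.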

The analysis of a stationary time series then proceeds from model identification, through parameter estimation, to diagnostic checking. To identify the dynamic of a stationary series as autoregressive (AR), moving average (MA), or a combination of both, along with the order of those components, one examines the AUTOCORRELATIONS and partial autocorrelations of the time series up to a sufficient number of lags. Any ARMA process leaves its characteristic signature in those correlation functions (ACF and PACF). The parameters of the identified model are then estimated through iterative maximum likelihood procedures. Whether this model adequately captures the ARMA dynamic of the time series is judged from diagnostics on its residuals. The Ljung-Box Q-statistic is a widely used summary test of whether the residuals are white noise.
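The Ljung-Box statistic can be computed directly from sample autocorrelations as $Q = n(n+2) \sum_{k=1}^{h} r_k^2 / (n-k)$, where $r_k$ is the lag-$k$ autocorrelation and $h$ is the number of lags tested. The sketch below is a simplified illustration, not a full diagnostic routine: it applies $Q$ to two raw simulated series (white noise and an AR(1) series with an assumed $\phi = 0.7$) rather than to residuals from a fitted model, and compares the result with the 5% chi-square critical value for 10 degrees of freedom (about 18.31). For residuals from a fitted ARMA(p, q) model, the degrees of freedom are usually reduced to $h - p - q$. All names in the code are hypothetical.

```python
import random


def autocorr(series, lag):
    """Sample autocorrelation of a series at the given lag."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    denom = sum(d * d for d in dev)
    return sum(dev[t] * dev[t - lag] for t in range(lag, n)) / denom


def ljung_box_q(series, max_lag):
    """Ljung-Box Q: n (n + 2) * sum over lags k of r_k^2 / (n - k)."""
    n = len(series)
    return n * (n + 2) * sum(
        autocorr(series, k) ** 2 / (n - k) for k in range(1, max_lag + 1)
    )


rng = random.Random(42)
white = [rng.gauss(0.0, 1.0) for _ in range(500)]

# AR(1) series driven by the same shocks; phi = 0.7 is an illustrative value.
ar_series, z = [], 0.0
for u in white:
    z = 0.7 * z + u
    ar_series.append(z)

CHI2_95_DF10 = 18.307  # 5% critical value of chi-square with 10 degrees of freedom

q_white = ljung_box_q(white, 10)
q_ar = ljung_box_q(ar_series, 10)
print("Q for white noise:", round(q_white, 2))
print("Q for AR(1) series:", round(q_ar, 2))
print("AR(1) series rejected as white noise:", q_ar > CHI2_95_DF10)
```

A series with leftover autocorrelation produces a large $Q$ and is rejected as white noise, which is the signal that the identified model has not yet captured the full ARMA dynamic. For white noise, $Q$ should usually fall below the critical value, although any single sample can exceed it by chance.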

...
