Asymptotic Properties

To make a STATISTICAL INFERENCE, we need to know the SAMPLING DISTRIBUTION of our ESTIMATOR. In general, the exact (finite sample) distribution will not be known. Asymptotic properties give us an approximate distribution for the estimator by describing its behavior as the sample size grows without bound ($n \to \infty$).

Asymptotic results are based on the sample size $n$. Let $x_n$ be a RANDOM VARIABLE indexed by the size of the SAMPLE. To define consistency, we need the notion of convergence in PROBABILITY. We say that $x_n$ converges in probability to a constant $c$ if $\lim_{n \to \infty} \Pr(|x_n - c| > \varepsilon) = 0$ for every $\varepsilon > 0$. Convergence in probability means that values of the variable that are not close to $c$ become increasingly unlikely as the sample size $n$ increases. It is usually denoted by $\operatorname{plim} x_n = c$. An ESTIMATOR $\hat{\theta}_n$ of a parameter $\theta$ is then a consistent estimator if and only if $\operatorname{plim} \hat{\theta}_n = \theta$. Roughly speaking, as the sample size gets large, the estimator's sampling distribution piles up on the true value.
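Consistency can be checked informally by simulation. The Python sketch below is illustrative only: it assumes Exponential(1) data (true mean $\mu = 1$), a tolerance $\varepsilon = 0.1$, and a hypothetical helper `prob_far_from_truth`, none of which come from the entry. It estimates $\Pr(|\bar{x}_n - \mu| > \varepsilon)$ for the sample mean $\bar{x}_n$ at several sample sizes; because the sample mean is a consistent estimator of $\mu$, the estimated probability should shrink toward zero as $n$ increases.

```python
import random


def prob_far_from_truth(n, eps=0.1, reps=1000, seed=0):
    """Monte Carlo estimate of Prob(|xbar_n - mu| > eps), where xbar_n is the
    mean of n Exponential(1) draws and the true mean is mu = 1."""
    rng = random.Random(seed)  # seeded so the experiment is reproducible
    mu = 1.0
    far = 0
    for _ in range(reps):
        xbar = sum(rng.expovariate(1.0) for _ in range(n)) / n
        if abs(xbar - mu) > eps:
            far += 1
    return far / reps


# The estimated probability should fall toward 0 as n grows (plim xbar_n = mu).
for n in (10, 100, 1000):
    print(n, prob_far_from_truth(n))
```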

Although consistency is an important property of an estimator, to conduct statistical inference we need to know its sampling distribution. To derive an estimator's sampling distribution, we need the notion of a limiting distribution. Suppose that $F(x)$ is the cumulative distribution function (cdf) of some random variable $x$, and let $x_n$ be a sequence of random variables indexed by sample size with cdf $F_n(x)$. We say that $x_n$ converges in distribution to $x$ if $\lim_{n \to \infty} |F_n(x) - F(x)| = 0$ at every point $x$ where $F$ is continuous.

We denote convergence in distribution by $x_n \xrightarrow{d} x$. In words, the distribution of $x_n$ gets close to the distribution of $x$ as the sample size gets large. Convergence in distribution, together with the central limit theorem, allows us to construct the asymptotic distribution of an estimator.
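A rough numerical picture of convergence in distribution, offered as a sketch under stated assumptions: for Exponential(1) data (an assumption of this example, with $\mu = \sigma = 1$), the standardized sample mean $\sqrt{n}(\bar{x}_n - 1)$ should converge in distribution to a standard normal variable. The hypothetical function `sup_cdf_gap` simulates this statistic and reports the largest gap between its empirical cdf and the standard normal cdf $\Phi$. Because $F_n$ is itself estimated by simulation, the reported gap includes Monte Carlo noise of roughly $1/\sqrt{\text{reps}}$ and will not reach exactly zero.

```python
import math
import random
from statistics import NormalDist


def sup_cdf_gap(n, reps=2000, seed=1):
    """Approximate sup_x |F_n(x) - Phi(x)|, where F_n is the cdf of
    sqrt(n) * (xbar_n - mu) / sigma for Exponential(1) data (mu = sigma = 1)."""
    rng = random.Random(seed)
    phi = NormalDist().cdf  # standard normal cdf: the limiting F(x)
    # Simulated draws of the standardized sample mean, sorted for the empirical cdf
    z = sorted(
        math.sqrt(n) * (sum(rng.expovariate(1.0) for _ in range(n)) / n - 1.0)
        for _ in range(reps)
    )
    # Kolmogorov distance: the empirical cdf jumps from i/reps to (i+1)/reps at z[i]
    return max(
        max((i + 1) / reps - phi(v), phi(v) - i / reps)
        for i, v in enumerate(z)
    )


for n in (1, 10, 200):
    print(n, round(sup_cdf_gap(n), 3))
```

For $n = 1$ the statistic is just a shifted exponential variable, which is far from normal; as $n$ grows the gap should shrink toward the simulation noise floor.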

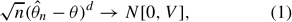

An asymptotic distribution is a distribution used to approximate the true finite sample distribution of a random variable. Suppose that $\hat{\theta}_n$ is a consistent estimator of a parameter $\theta$ and that, under suitable regularity conditions, a central limit theorem applies to it. Then

$$\sqrt{n}\,(\hat{\theta}_n - \theta) \xrightarrow{d} N[0, V],$$

where $N[\cdot]$ denotes the NORMAL DISTRIBUTION and $V$ is the asymptotic covariance matrix. This implies that the asymptotic distribution of $\hat{\theta}_n$ is

$$\hat{\theta}_n \overset{a}{\sim} N\!\left[\theta, \tfrac{1}{n} V\right].$$

This is a very striking result. It says that, regardless of the true underlying finite sample distribution, we can approximate the distribution of the estimator by the Normal distribution as the sample size gets large. We can thus use the Normal distribution to conduct HYPOTHESIS TESTING as if we knew the true sampling distribution.
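Treating $\hat{\theta}_n$ as approximately $N[\theta, V/n]$ is what justifies the usual normal-based confidence intervals and tests. As a minimal sketch, assuming the estimator is a sample mean and that $V$ is estimated by the sample variance, the hypothetical function `asymptotic_mean_inference` below computes a Wald-type confidence interval and a z statistic for $H_0\colon \theta = \theta_0$ using Python's standard `statistics` module.

```python
import math
from statistics import NormalDist, fmean, variance


def asymptotic_mean_inference(data, theta0, level=0.95):
    """Wald-type inference for a mean, treating xbar as N[theta, V/n]
    with V estimated by the sample variance (an assumption of this sketch)."""
    n = len(data)
    theta_hat = fmean(data)
    se = math.sqrt(variance(data) / n)  # estimated sqrt(V / n)
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for level = 0.95
    ci = (theta_hat - z_crit * se, theta_hat + z_crit * se)
    z = (theta_hat - theta0) / se  # test statistic for H0: theta = theta0
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value
    return theta_hat, se, ci, z, p_value


sample = [2.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0, 4.0]  # illustrative numbers only
est, se, ci, z, p = asymptotic_mean_inference(sample, theta0=4.0)
print(f"estimate={est:.3f} se={se:.3f} CI=({ci[0]:.3f}, {ci[1]:.3f}) z={z:.3f} p={p:.3f}")
```

With only eight observations the normal approximation may be poor, so these numbers are purely illustrative of the mechanics.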

However, it must be noted that the asymptotic distribution is only an approximation, although one that will improve with sample size; thus, the size, power, and other properties of the hypothesis test are only approximate. Unfortunately, we cannot, in general, say how large a sample needs to be to have confidence in using the asymptotic distribution for statistical inference. One must rely on previous experience, bootstrapping simulations, or Monte Carlo experiments to provide an indication of how useful the asymptotic approximations are in any given situation.
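A Monte Carlo experiment of the kind described above can be sketched as follows. The setup is an assumption chosen for illustration: skewed Exponential(1) data, a nominal 5% two-sided z-test of $H_0\colon \mu = 1$ (which is true), sample sizes 10 and 200, and 2000 replications, wrapped in a hypothetical function `empirical_size`. The estimated rejection rate is the test's actual size; a noticeable gap between it and 0.05 suggests that the asymptotic approximation is not yet reliable at that sample size.

```python
import math
import random
from statistics import NormalDist


def empirical_size(n, reps=2000, alpha=0.05, seed=2):
    """Monte Carlo estimate of the actual rejection rate of a nominal-alpha,
    two-sided asymptotic z-test of H0: mean = 1 when H0 is true
    (data are Exponential(1), so the true mean is 1)."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    rejections = 0
    for _ in range(reps):
        x = [rng.expovariate(1.0) for _ in range(n)]
        xbar = sum(x) / n
        s2 = sum((v - xbar) ** 2 for v in x) / (n - 1)  # sample variance estimates V
        z = (xbar - 1.0) / math.sqrt(s2 / n)
        if abs(z) > z_crit:
            rejections += 1
    return rejections / reps


# If the asymptotic approximation were exact, both rates would be close to 0.05.
for n in (10, 200):
    print(n, empirical_size(n))
```

The same loop could be rerun with other distributions or sample sizes to judge, for a particular application, how large $n$ must be before the normal approximation is trustworthy.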
