Root Mean Square Error

Root mean square error (RMSE) is the square root of the mean squared error (MSE). RMSE measures the differences between the values predicted by a hypothesized model and the values actually observed. In other words, it measures the quality of the fit between the actual data and the predicted model. RMSE is one of the most frequently used measures of the goodness of fit of generalized regression models.

RMSE for Regression

In the application of regression models, unless the relationship or correlation is perfect, the predicted values differ to some degree from the actual observations. These differences are prediction errors, or residuals, and they are measured by the vertical distances between the actual values and the regression line. Large distances indicate large errors. However, for a regression line fitted by least squares with an intercept, the sum of the residuals (and therefore their average) equals zero, because the overestimation of some scores is canceled out by the underestimation of others. Thus, a common practice in statistical work is to square the residuals, so that positive and negative errors no longer cancel and the magnitude of the differences is preserved. Indeed, a primary goal in linear regression is to minimize the sum of the squared prediction errors to best model the relations among variables.
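The cancellation described above can be checked numerically. The following is a minimal sketch in plain Python, assuming a single predictor and a small made-up data set (the values of `x` and `y` are hypothetical and chosen only for illustration). It fits a least-squares line and compares the sum of the residuals with the sum of the squared residuals.

```python
# Hypothetical paired observations (illustrative values only).
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

n = len(x)
mean_x = sum(x) / n
mean_y = sum(y) / n

# Least-squares slope and intercept for a one-predictor regression.
s_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
s_xx = sum((xi - mean_x) ** 2 for xi in x)
slope = s_xy / s_xx
intercept = mean_y - slope * mean_x

# Residual = observed value minus predicted value (vertical distance).
residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

sum_residuals = sum(residuals)                           # cancels to about 0
sum_squared_residuals = sum(r ** 2 for r in residuals)   # strictly positive

print(f"sum of residuals:         {sum_residuals:.6f}")
print(f"sum of squared residuals: {sum_squared_residuals:.6f}")
```

The raw residuals sum to zero up to floating-point rounding, while the squared residuals do not, which is why the squared form is the one used to summarize error.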

To obtain the RMSE, one first squares the individual prediction errors and averages them over the whole sample. The average of all the squared errors is the MSE. The MSE, in essence, is an average of the spread of the data around the regression line and reflects how big the “typical” prediction error is, expressed in squared units. Taking the square root of the MSE then yields the RMSE, which represents prediction errors in the same units as the data rather than in squared units.
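The steps just described (square the errors, average them, take the square root) can be sketched in a few lines of Python. The function names and the sample values below are illustrative assumptions, not part of the entry.

```python
import math


def mean_squared_error(observed, predicted):
    """Average of the squared prediction errors (in squared units)."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return sum(squared_errors) / len(squared_errors)


def root_mean_squared_error(observed, predicted):
    """Square root of the MSE (in the same units as the data)."""
    return math.sqrt(mean_squared_error(observed, predicted))


# Hypothetical observed and predicted values.
observed = [3.0, 5.0, 7.0, 9.0]
predicted = [2.0, 5.0, 8.0, 11.0]

mse = mean_squared_error(observed, predicted)        # errors 1, 0, -1, -2
rmse = root_mean_squared_error(observed, predicted)

print(f"MSE  = {mse}")
print(f"RMSE = {rmse:.4f}")
```

Here the squared errors are 1, 0, 1, and 4, so the MSE is 1.5 and the RMSE is its square root, roughly 1.22 in the original units.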

Mathematical Definition

Since RMSE is the square root of MSE, a thorough knowledge of MSE is important to an understanding of the mathematical definition and properties of RMSE.

MSE

MSE is the mean of the overall squared prediction errors. It takes into account the bias, or the tendency of the estimator to overestimate or underestimate the actual values, and the variability of the estimator, or the standard error.

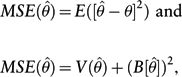

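Suppose that $\hat{\theta}$ is an estimator of a population parameter $\theta$. The MSE of $\hat{\theta}$ is the expected value of the squared error:

$$\mathrm{MSE}(\hat{\theta}) = E\left[(\hat{\theta} - \theta)^2\right] = V(\hat{\theta}) + \left[B(\hat{\theta})\right]^2,$$

where $V(\hat{\theta})$ denotes the variance of $\hat{\theta}$ and $B(\hat{\theta}) = E(\hat{\theta}) - \theta$ denotes its bias.

An MSE of zero means that the estimator $\hat{\theta}$ predicts the parameter $\theta$ with perfect accuracy.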
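The way MSE combines bias and variability follows the standard identity that MSE equals the variance of the estimator plus the square of its bias. The sketch below verifies that identity on a toy case, assuming a hypothetical estimator that takes each of four values with equal probability; the names `estimates` and `theta` and all numbers are invented for illustration.

```python
# Hypothetical setup: the estimator takes each of these values with
# equal probability, and the true parameter value is theta.
estimates = [4.0, 5.0, 6.0, 7.0]
theta = 5.0

n = len(estimates)
expected_value = sum(estimates) / n

# Bias: how far the estimator's expected value is from the truth.
bias = expected_value - theta

# Variance: spread of the estimator around its own expected value.
variance = sum((e - expected_value) ** 2 for e in estimates) / n

# MSE: expected squared distance from the true parameter.
mse = sum((e - theta) ** 2 for e in estimates) / n

print(f"bias = {bias}, variance = {variance}, MSE = {mse}")
print(f"variance + bias^2 = {variance + bias ** 2}")
```

With these values the bias is 0.5, the variance is 1.25, and the MSE is 1.5, which matches 1.25 + 0.5². If the bias were zero, the MSE would reduce to the variance alone.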

RMSE

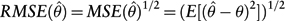

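RMSE summarizes the typical vertical distance of the actual data points from the fitted line. Because it is the square root of the MSE, that is, of the average squared distance, large errors weigh more heavily than they would in a simple average of absolute distances.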

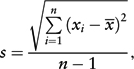

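For an unbiased estimator, RMSE is equivalent to the standard error of the estimate, and it can be calculated using the formula

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(X_i - \hat{X}_i\right)^2},$$

where n denotes the size of the sample or the number of observations; $X_i$ represents individual values, and $\hat{X}_i$ represents the corresponding predicted values.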

In many statistical procedures, such as analysis of variance and linear regression, the RMSE values are used to determine the statistical significance of the variables or factors under study. RMSE is also used in regression models to determine how many predictors to include in a model for a particular sample.
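As a rough illustration of comparing models with different numbers of predictors, the sketch below contrasts an intercept-only model with a one-predictor regression on made-up data. It assumes the degrees-of-freedom form of the statistic, in which the sum of squared errors is divided by n minus the number of estimated coefficients; that adjustment, the helper name `adjusted_rmse`, and the data are assumptions introduced here rather than details given in the entry.

```python
import math

# Hypothetical data (illustrative values only).
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
n = len(y)
mean_x = sum(x) / n
mean_y = sum(y) / n


def adjusted_rmse(observed, predicted, n_coefficients):
    """sqrt(SSE / (n - number of estimated coefficients))."""
    sse = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    return math.sqrt(sse / (len(observed) - n_coefficients))


# Model A: no predictors, every prediction is the sample mean.
pred_a = [mean_y] * n

# Model B: one predictor, fitted by least squares.
slope = (sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
         / sum((xi - mean_x) ** 2 for xi in x))
intercept = mean_y - slope * mean_x
pred_b = [intercept + slope * xi for xi in x]

rmse_a = adjusted_rmse(y, pred_a, n_coefficients=1)  # intercept only
rmse_b = adjusted_rmse(y, pred_b, n_coefficients=2)  # intercept + slope

print(f"intercept-only model: {rmse_a:.3f}")
print(f"one-predictor model:  {rmse_b:.3f}")
```

In this toy case the predictor reduces the statistic substantially, which would favor keeping it. Dividing by the residual degrees of freedom rather than by n keeps the comparison from automatically rewarding every added predictor.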

...
