
Factor Analysis: Exploratory

Introduction

Exploratory Factor Analysis (EFA) has long been a central technique in psychological research and a powerful tool for reducing the complexity of a set of data. Its key idea is that the variability in a large set of observed variables depends on a limited number of non-observable ‘latent’ constructs. This entry addresses key issues in EFA: its aims, the basic equations, factor extraction and rotation, the number of factors in a factor solution, factor measurement and replicability, assumptions, and future perspectives.

Aims of Exploratory Factor Analysis

Exploratory Factor Analysis (EFA) has been widely used since the beginning of the 20th century in many fields of psychological research, such as the study of mental abilities, personality traits, values and beliefs, and the development of psychological tests (see Cattell, 1978; Comrey & Lee, 1992; Harman, 1976; McDonald, 1985). Its key idea is that the variability in a large set of observed variables depends on the action of a much smaller number of non-observable ‘latent’ constructs. The aims of EFA are twofold: to reduce the dimensionality of the original set of variables, and to identify the major latent dimensions (the factors) that explain the correlations among the observed variables. The starting point of an EFA is a matrix (R) of correlation coefficients (usually Pearson coefficients). The end product is a matrix (A) that contains the correlations between the observed variables and the factors (called ‘factor loadings’): a rectangular matrix with as many rows as there are observed variables and as many columns as there are latent factors.
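As a hedged illustration of the path from R to A, the sketch below extracts unrotated loadings from a correlation matrix by iterated principal axis factoring. Principal axis factoring is only one of several extraction methods and is chosen here as an assumption; the function name `principal_axis`, its parameters and the starting values (squared multiple correlations) are illustrative, and the sketch has no safeguard against communalities drifting above 1 (Heywood cases).

```python
import numpy as np


def principal_axis(R: np.ndarray, n_factors: int, n_iter: int = 100, tol: float = 1e-6) -> np.ndarray:
    """Hypothetical helper: return an unrotated loading matrix A
    (variables x factors) from a correlation matrix R by iterated
    principal axis factoring."""
    R = np.asarray(R, dtype=float)
    # Initial communality estimates: squared multiple correlations.
    h2 = 1.0 - 1.0 / np.diag(np.linalg.inv(R))
    A = np.zeros((R.shape[0], n_factors))
    for _ in range(n_iter):
        R_reduced = R.copy()
        np.fill_diagonal(R_reduced, h2)  # communalities replace the 1s
        eigvals, eigvecs = np.linalg.eigh(R_reduced)
        # eigh returns eigenvalues in ascending order: keep the largest ones.
        idx = np.argsort(eigvals)[::-1][:n_factors]
        vals = np.clip(eigvals[idx], 0.0, None)
        A = eigvecs[:, idx] * np.sqrt(vals)  # scale eigenvectors into loadings
        new_h2 = np.sum(A**2, axis=1)        # updated communalities
        converged = np.max(np.abs(new_h2 - h2)) < tol
        h2 = new_h2
        if converged:
            break
    return A
```

For a data array `X` with persons in rows and variables in columns, `principal_axis(np.corrcoef(X, rowvar=False), n_factors=2)` would return a variables × 2 matrix; the columns are unrotated and their signs are arbitrary.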

Basic Equations

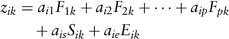

The basic idea of EFA is that a standard score on a variable can be expressed as a weighted sum of the latent factors, so that the following specification equation holds:

where zik is the standard score for a person k on the variable i; ai1 to aip, ais and aie are the loadings on, respectively, the common factors F, the specific factor S and the error factor E; F1k to Fpk, Sik and Eik are the standard scores of person k on, respectively, the common factors F, the specific factor S and the error factor E. While the common factors represent the variance that each variable shares with the other variables, the specific and error factors represent sources of variance that are unique for each variable.
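The specification equation can be read as a recipe for generating scores. The minimal sketch below assumes normally distributed, standardized and mutually uncorrelated factors F, S and E, and simulates scores for n persons from hypothetical loadings; the error loadings are set so that each $z_i$ has unit variance. Because S and E are unique to each variable, the observed correlations between variables should approach the values implied by the common-factor loadings alone as the number of persons grows. The function `simulate_scores` and all loading values are illustrative.

```python
import numpy as np


def simulate_scores(A, a_s, n_persons, seed=0):
    """Hypothetical helper: draw z[k, i] from the specification equation
    z_ik = sum_j a_ij F_jk + a_is S_ik + a_ie E_ik."""
    A = np.asarray(A, dtype=float)       # common-factor loadings (variables x factors)
    a_s = np.asarray(a_s, dtype=float)   # specific-factor loadings, one per variable
    n_vars, n_factors = A.shape
    # Error loadings chosen so every z_i has unit variance, assuming
    # standardized, uncorrelated factors: 1 = sum_j a_ij^2 + a_is^2 + a_ie^2.
    a_e2 = 1.0 - np.sum(A**2, axis=1) - a_s**2
    if np.any(a_e2 < 0):
        raise ValueError("squared loadings exceed 1 for some variable")
    a_e = np.sqrt(a_e2)
    rng = np.random.default_rng(seed)
    F = rng.standard_normal((n_persons, n_factors))  # common factor scores
    S = rng.standard_normal((n_persons, n_vars))     # specific factor scores
    E = rng.standard_normal((n_persons, n_vars))     # error factor scores
    return F @ A.T + S * a_s + E * a_e
```

For instance, two variables with loadings of 0.8 and 0.7 on one common factor and 0.1 and 0.2 on a second should correlate at about 0.8 × 0.7 + 0.1 × 0.2 = 0.58 in a large simulated sample.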

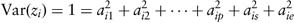

The equation above is a basis for ‘decomposing’ the R matrix into the product of two other matrices, the matrix of factor loadings (A) and its transpose (A′), so that R = AA′ (this is called the fundamental equation of Factor Analysis). The key idea here is that the original correlation matrix can be ‘reproduced’ from the factor solution. From this decomposition it is possible to derive the following equation, which relates the variance of a standardized variable zi to the factor loadings:

In sum, the total variance of a standardized variable (equal to 1) can be divided into two parts: a part that the variable shares with the other variables and that is explained by the p common factors (this part is called communality, and is equal to the sum of the variable's squared loadings on the common factors, $h_i^2 = a_{i1}^2 + a_{i2}^2 + \dots + a_{ip}^2$), and a part that is explained by the specific and error factors (the combination of these two components is called uniqueness, $u_i^2 = a_{is}^2 + a_{ie}^2$). Hence $h_i^2 + u_i^2 = 1$.
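These quantities are straightforward to compute from a loading matrix. The sketch below, assuming uncorrelated common factors, derives communalities as row sums of squared loadings and uniquenesses as $1 - h_i^2$ (the specific and error parts cannot be separated from the correlation matrix alone). It then reproduces the correlation matrix as AA′ plus the diagonal matrix of uniquenesses and returns the residuals against an observed R. The function names and example loadings are hypothetical.

```python
import numpy as np


def communalities_and_uniquenesses(A):
    """Return (h2, u2) for a loading matrix A (variables x common factors)."""
    A = np.asarray(A, dtype=float)
    h2 = np.sum(A**2, axis=1)  # h_i^2 = a_i1^2 + ... + a_ip^2
    u2 = 1.0 - h2              # u_i^2 = a_is^2 + a_ie^2 = 1 - h_i^2
    return h2, u2


def reproduced_correlations(A):
    """R_hat = A A' + diag(u^2): unit diagonal, loadings-implied off-diagonal."""
    A = np.asarray(A, dtype=float)
    _, u2 = communalities_and_uniquenesses(A)
    return A @ A.T + np.diag(u2)


def residual_correlations(R, A):
    """Observed minus reproduced correlations; small residuals mean A fits R well."""
    return np.asarray(R, dtype=float) - reproduced_correlations(A)
```

With `A = [[0.8, 0.0], [0.6, 0.0], [0.0, 0.7]]`, the communalities are 0.64, 0.36 and 0.49, the reproduced correlation between the first two variables is 0.8 × 0.6 = 0.48, and the third variable's reproduced correlations with the others are 0, because it loads only on the second factor.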

...

