Mathematical Theory of Communication

Claude Shannon's mathematical theory of communication concerns the quantitative limits of mediated communication. The theory has roots in cryptography and in the measurement of telephone traffic. Paralleling work by U.S. cybernetician Norbert Wiener and Soviet logician Andrei N. Kolmogorov, the theory was first published after declassification in 1948. On Wilbur Schramm's initiative, it appeared in 1949 as a book with a brief commentary by Warren Weaver. The theory provided a scientific foundation for the emerging discipline of communication but is now recognized as addressing only parts of the field.

For Shannon, “The fundamental problem of communication is that of reproducing at one point either exactly or approximately a message selected at another point” (Shannon & Weaver, 1949, p. 3). Shannon did not want to confound his theory with psychological issues and considered meanings irrelevant to the problem of using, analyzing, and designing mediated communication. The key to Shannon's theory is that messages are distinguished by selecting them from a set of possible messages, whatever criteria determine that choice. His theory has 22 theorems and seven appendices. Its basic idea is outlined as follows.

The Basic Measure

Arguably, informed choices are better than chance, and selecting a correct answer from among many possible answers to a question is more difficult and requires more information than selecting one from among few. For example, guessing the name of a person is more difficult than guessing his or her gender. A name would therefore provide more information than a gender, the former often implying information about the latter. Intuitively, a communication that eliminates all alternatives conveys more information than one that leaves some of them uncertain. Furthermore, two identical messages should provide no more information than either one alone, and two different messages should provide more information than either by itself.

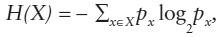

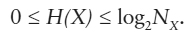

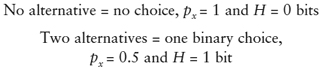

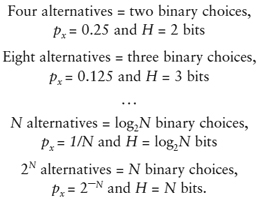

To define quantities associated with selecting messages, in his second theorem, Shannon proved that the logarithm function was the only one that conforms to the above intuitions. Logarithms increase monotonically with the number of alternatives available for selection and are additive when alternatives are multiplicative. Although the base of this logarithm is arbitrary, Shannon set it to two, thereby acknowledging that the choice between two equally likely alternatives (answering a yes-or-no question or turning a switch on or off) is the most elementary choice conceivable. His basic measure, called entropy H, is

$$H(X) = -\sum_{x \in X} p_x \log_2 p_x$$

where $p_x$ is the probability of message x occurring in the set of possible messages X. The minus sign ensures that entropies are nonnegative quantities. With $N_x$ as the size of the set X of possible messages, H's range is

$$0 \le H(X) \le \log_2 N_x$$

H averages the number of binary choices needed to select one message from a larger set, or the number of binary digits, bits for short, needed to enumerate that set. H is interpretable as a measure of uncertainty, variation, disorder, ignorance, or lack of information. When alternatives are equally likely,

$$H(X) = \log_2 N_x$$
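The entropy measure described above can be illustrated with a short calculation. The following is a minimal sketch in Python, not part of Shannon's text: the function name `entropy_bits` and the three example distributions are assumptions chosen only for illustration. It computes H in bits and checks that the result lies between zero and the base-2 logarithm of the number of possible messages.

```python
import math


def entropy_bits(probabilities):
    """Return the entropy H, in bits, of a discrete probability distribution.

    `entropy_bits` is a hypothetical helper name used for this illustration.
    """
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # p * log2(1/p) equals -p * log2(p); messages with p = 0 contribute nothing.
    return sum(p * math.log2(1.0 / p) for p in probabilities if p > 0)


# Three illustrative distributions over a set of four possible messages.
uniform = [0.25, 0.25, 0.25, 0.25]   # all messages equally likely
skewed = [0.7, 0.1, 0.1, 0.1]        # one message far more likely than the rest
certain = [1.0, 0.0, 0.0, 0.0]       # only one message can occur

h_uniform = entropy_bits(uniform)
h_skewed = entropy_bits(skewed)
h_certain = entropy_bits(certain)
h_max = math.log2(len(uniform))      # upper bound: log2 of the set size

print(h_uniform, h_skewed, h_certain, h_max)
```

Under these assumptions, the equally likely case reaches the upper bound of 2 bits, the certain case yields 0 bits, and the skewed case falls in between at about 1.36 bits.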

Entropies and Communication

The additivity of H gives rise to a calculus of communication. For a sender S and a receiver R one can measure three basic entropies.

1. The uncertainty of messages s at sender S, occurring with probability $p_s$

...
